
How does one call packages from Spark to be used for data operations with R?

AWS EMR bootstrap to install R packages from CRAN. This bootstrap is useful if you want to deploy SparkR applications that run arbitrary code on the EMR cluster's workers. The R code needs its dependencies already installed on each of the workers and will fail otherwise. This is the case if you use functions such as gapply or dapply.
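A bootstrap action of this kind might look like the minimal sketch below. The function names, the `CRAN_REPO` default, and the example package names are illustrative assumptions rather than part of the bootstrap described above, and the sketch assumes R is already present on each node.

```shell
#!/bin/sh
# Assumed CRAN mirror; override CRAN_REPO to use a different one.
CRAN_REPO="${CRAN_REPO:-https://cloud.r-project.org}"

# Build the R expression install.packages(c('a','b'), repos='...')
# from the package names passed as arguments.
build_install_expr() {
  quoted=""
  for pkg in "$@"; do
    quoted="${quoted:+$quoted,}'$pkg'"
  done
  printf "install.packages(c(%s), repos='%s')" "$quoted" "$CRAN_REPO"
}

# Install the named packages system-wide on the current node.
install_r_packages() {
  sudo Rscript -e "$(build_install_expr "$@")"
}
```

Calling `install_r_packages data.table jsonlite` (hypothetical package names) from the bootstrap script would then run the install on every node as the cluster starts, so that functions passed to gapply or dapply find their dependencies on the workers.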

For example, I am trying to access my test.csv in HDFS as below

but I get the error below:

I tried loading the CSV package with the option below

but I get the following error while loading sqlContext

Any help will be highly appreciated.

san71

1 Answer

So it looks like by setting SPARKR_SUBMIT_ARGS you are overriding the default value, which is sparkr-shell. You could probably do the same thing and just append sparkr-shell to the end of your SPARKR_SUBMIT_ARGS. This seems unnecessarily complex compared to depending on jars, so I've created a JIRA to track this issue (and I'll try to add a fix if the SparkR people agree with me): https://issues.apache.org/jira/browse/SPARK-8506.

Note: another option would be using the sparkr command + --packages com.databricks:spark-csv_2.10:1.0.3 since that should work.
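The first suggestion, appending sparkr-shell to the submit arguments, can be sketched from the shell before R is started. This is a minimal sketch that assumes the R session inherits the exported variable; the `SPARK_PACKAGES` helper variable is introduced here only for illustration.

```shell
# Package coordinate quoted in the note above; swap in whatever you need.
SPARK_PACKAGES='com.databricks:spark-csv_2.10:1.0.3'

# Put the custom arguments first and keep the default trailing token
# "sparkr-shell", so that overriding the variable does not drop it.
SPARKR_SUBMIT_ARGS="--packages ${SPARK_PACKAGES} sparkr-shell"
export SPARKR_SUBMIT_ARGS

echo "$SPARKR_SUBMIT_ARGS"
```

The ordering is the point of the sketch: the custom arguments come first and sparkr-shell stays as the last token.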

Holden


I have the latest version of R, 3.2.1. Now I want to install SparkR on R. After I execute:

I got back:

I have also installed Spark on my machine

How can I solve this problem?

Guforu

4 Answers

You can install directly from a GitHub repository:
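A minimal sketch of such an install, driven from the shell. It assumes devtools is already installed in R and uses the devtools::install_github call with subdir='R/pkg' that appears elsewhere in this thread. The `SPARK_TAG` default and the `RUN_INSTALL` switch are hypothetical names used only for this sketch; by default it only prints the R expression.

```shell
# Example tag only; use the v2.x.x tag that matches your Spark version.
SPARK_TAG="${SPARK_TAG:-v2.0.0}"

# R expression that installs SparkR from the R/pkg subdirectory of the repository.
INSTALL_EXPR="devtools::install_github('apache/spark@${SPARK_TAG}', subdir='R/pkg')"

# Run the install only when explicitly requested; otherwise show what would run.
if [ "${RUN_INSTALL:-0}" = "1" ]; then
  Rscript -e "$INSTALL_EXPR"
else
  echo "$INSTALL_EXPR"
fi
```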

You should choose the tag (v2.x.x above) corresponding to the version of Spark you use. You can find a full list of tags on the project page or directly from R using the GitHub API:

If you've downloaded a binary package from the downloads page, the R library is in the R/lib/SparkR subdirectory. It can be used to install SparkR directly. For example:

You can also add the R lib to .libPaths (taken from here):
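A shell-level alternative with a similar effect is to export the R_LIBS environment variable before starting R. Note that this swaps in a different technique from calling .libPaths() inside R. The `SPARK_HOME` default below is an assumption; point it at wherever Spark is unpacked.

```shell
# Assumed install location; adjust to your own Spark directory.
SPARK_HOME="${SPARK_HOME:-/usr/lib/spark}"

# Prepend Spark's bundled R library directory, keeping any existing R_LIBS entries.
R_LIBS="${SPARK_HOME}/R/lib${R_LIBS:+:${R_LIBS}}"
export SPARK_HOME R_LIBS

echo "$R_LIBS"
```

An R session started from this shell should then be able to resolve library(SparkR) from that directory, provided the binary distribution actually ships it there.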

Finally, you can use the sparkR shell without any additional steps:

Edit

According to the Spark 2.1.0 release notes, SparkR should be available on CRAN in the future:

Standalone installable package built with the Apache Spark release. We will be submitting this to CRAN soon.

You can follow SPARK-15799 to check the progress.

Edit 2

While SPARK-15799 has been merged, satisfying CRAN requirements proved to be challenging (see for example discussions about 2.2.2, 2.3.1, 2.4.0), and the package was subsequently removed (see for example SparkR was removed from CRAN on 2018-05-01, CRAN SparkR package removed?). As a result, the methods listed in the original post are still the most reliable solutions.

Edit 3

OK, SparkR is back up on CRAN again, v2.4.1. install.packages('SparkR') should work again (it may take a couple of days for the mirrors to reflect this).

zero323

SparkR requires not just an R package but an entire Spark backend to be pulled in. When you want to upgrade SparkR, you are upgrading Spark, not just the R package. If you want to go with SparkR then this blogpost might help you out: https://blog.rstudio.org/2015/07/14/spark-1-4-for-rstudio/.

It should be said though: nowadays you may want to refer to the sparklyr package as it makes all of this a whole lot easier.

It also offers more functionality than SparkR as well as a very nice interface to dplyr.

cantdutchthis

I also faced a similar issue while trying to use SparkR on EMR with Spark 2.0.0. Here are the steps I followed to install RStudio Server, SparkR, and sparklyr, and finally to connect to a Spark session on an EMR cluster:

wget https://download2.rstudio.org/rstudio-server-rhel-0.99.903-x86_64.rpm

then install using yum install:

sudo yum install --nogpgcheck rstudio-server-rhel-0.99.903-x86_64.rpm

finally add a user to access rstudio web console as:

sudo su

sudo useradd username

sudo echo username:password | chpasswd

ssh -NL 8787:ec2-emr-master-node-ip.compute-1.amazonaws.com:8787 [email protected]&

sudo yum update

sudo yum -y install libcurl-devel

sudo -u hdfs hadoop fs -mkdir /user/

sudo -u hdfs hadoop fs -chown /user/

spark-submit --version

export SPARK_HOME='/usr/lib/spark/'

install.packages('devtools')

devtools::install_github('apache/spark@v2.0.0', subdir='R/pkg')

install.packages('sparklyr')

library(SparkR)

library(sparklyr)

Sys.setenv(SPARK_HOME='/usr/lib/spark')

sc <- spark_connect(master = 'yarn-client')

Joarder Kamal

Versions 2.1.2 and 2.3.0 of SparkR are now available in the CRAN repository; you can install version 2.3.0 as follows:

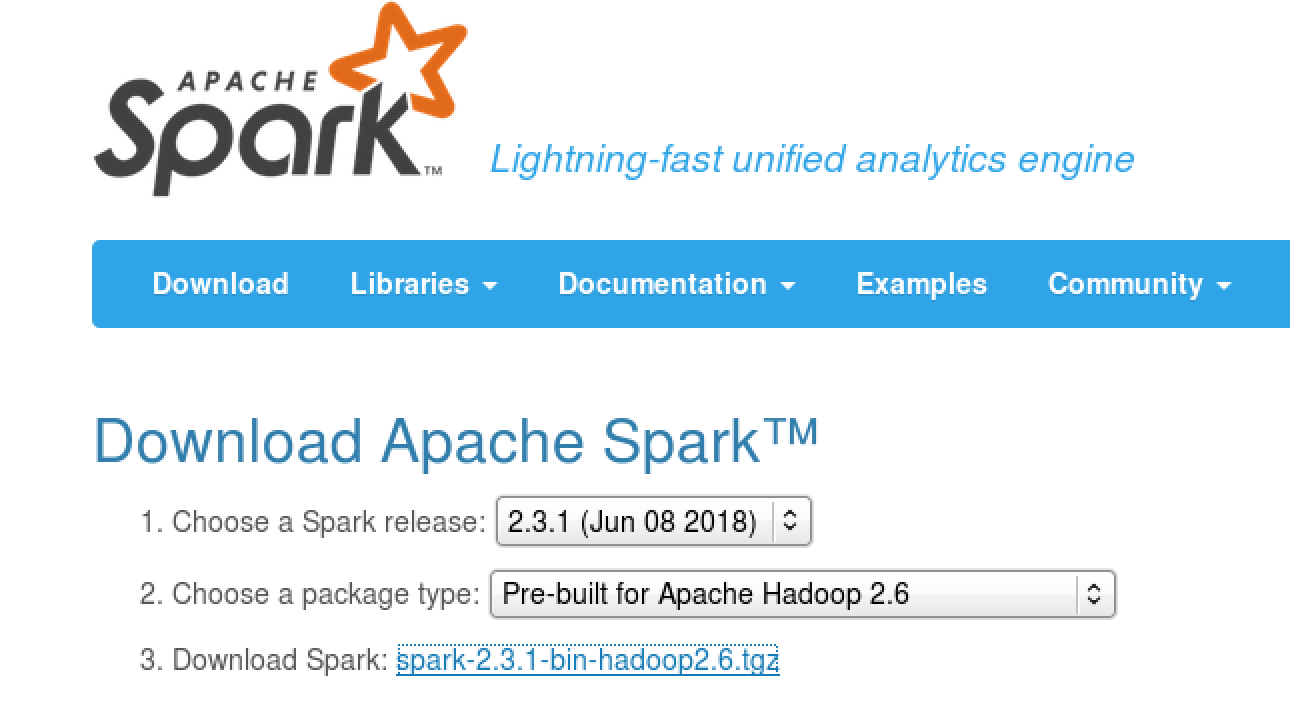

Note: You must first download and install the corresponding version of Apache Spark from the downloads page so that the package works correctly.

Rafael Díaz
